Our eyes allow us to see color and shapes, recognize our friends, and detect motion. In this article, we break down the complex topic of signal capture and processing.

Initially, I was about to write an article on why color correction and color management are difficult to master. After doing some research on anatomy and signal processing, I decided to dig deeper, break down this rather complex topic, and share it with our readers.

Please also consider reading my article on why color corrections are hard to master and what we can do to overcome those struggles.

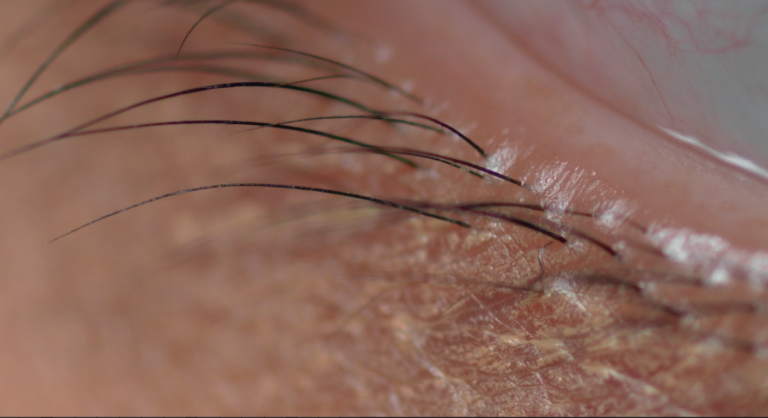

Differences In Photoreceptors

Rods

Rods make up the majority of photoreceptors; in fact, we have about 20 times more rods than cones. They are not receptive to colored light, only to light photons in general. They are found mostly toward the outer edges of the eye’s retina and are therefore used to measure the overall amount of light and for peripheral and night vision.

Cones

While humans have around 120 million rods spread throughout the retina, cones make up only 6-7% of all receptors. However, they are found almost exclusively in the most sensitive part of the retina. Cones are responsible for our color perception, clear vision, and perception of detail.

While rods do not differ much in their sensitivity to different wavelengths, cones are specialized to be highly sensitive to either RED, GREEN, or BLUE light. The share of each type of specialized cone is approximately R 60%, G 30%, B 10%.

Read more on how this affects channels in Photoshop.
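The photoreceptor figures in this section can be cross-checked with some quick arithmetic. The sketch below simply takes the numbers quoted above at face value (about 120 million rods, roughly 20 times more rods than cones, and the approximate 60/30/10 split between cone types); the variable names are only illustrative.

```python
# Rough cross-check of the photoreceptor figures quoted in the text.
RODS = 120_000_000        # rods per eye, as quoted above
ROD_TO_CONE_RATIO = 20    # "20 times more" rods than cones

cones = RODS / ROD_TO_CONE_RATIO
cone_share = cones / (RODS + cones)

# Approximate split between cone types, as quoted above.
CONE_SPLIT = {"red": 0.60, "green": 0.30, "blue": 0.10}
cones_by_type = {name: cones * share for name, share in CONE_SPLIT.items()}

print(f"cones: {cones:,.0f}")
print(f"cone share of all photoreceptors: {cone_share:.1%}")
for name, count in cones_by_type.items():
    print(f"{name} cones: {count:,.0f}")
```

With a 20:1 ratio this works out to about 6 million cones, a little under 5% of all photoreceptors, so the quoted figures are in the same ballpark but not exactly consistent with each other.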

Firing Rate And Action Potential

Signal processing works the same way as in every other nerve cell. It is a chemical process of pumping K+ and Na+ ions out of and into the cell. A signal is fired once the cell reaches a certain firing potential. For the cell to fire again, this process has to be reversed, which always leaves a short period during which the cell is unable to create another signal.
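The idea of a threshold followed by a recovery period can be sketched with a toy model. This is a deliberately simplified illustration, not a physiological simulation: the function name `simulate_firing`, the threshold, and the step counts are all assumptions made up for this example.

```python
def simulate_firing(inputs, threshold=1.0, recovery_steps=3):
    """Return the time steps at which a toy cell fires.

    The cell accumulates input until it reaches `threshold`, fires,
    resets, and then ignores all input for `recovery_steps` steps.
    """
    potential = 0.0
    cooldown = 0
    spikes = []
    for t, stimulus in enumerate(inputs):
        if cooldown > 0:
            # Still recovering: the cell cannot build up a new signal.
            cooldown -= 1
            continue
        potential += stimulus
        if potential >= threshold:
            spikes.append(t)
            potential = 0.0
            cooldown = recovery_steps
    return spikes


steady_input = [0.5] * 20  # the same stimulus at every time step
fast_cell = simulate_firing(steady_input, recovery_steps=1)
slow_cell = simulate_firing(steady_input, recovery_steps=6)

print("fast recovery:", len(fast_cell), "signals")
print("slow recovery:", len(slow_cell), "signals")
```

Given the same steady stimulus, the cell with the shorter recovery time fires more often in the same number of steps, which is the only point this sketch is meant to make.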

Rods and cones are very different in their ability to fire these signals. Rods handle our luminance perception and are comparatively slow in regaining their action potential. You have probably looked into a very bright light before, or remember going from a dark room straight out into the midday sun. Your eyes have a hard time adjusting, not because the iris cannot open and close quickly enough, but because the rods overlay a sort of latent image on the signals coming in from the cones, or vice versa.

Nerve Cell Firing Potential and Neurotransmitter release

You might have guessed that it is the other way around for cones, and you are right: cones are very fast at regaining their action potential, which allows us to constantly refresh the information we see at a very high rate and with a very high level of detail. Compared to a camera, this is similar to capturing motion with a slow or a fast shutter speed. A low refresh rate in the cones would be like leaving the shutter open and seeing only a blurry image. A fast shutter speed lets you capture more detail and also take multiple shots per second.
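The shutter analogy can be put into numbers. The sketch below is about the camera side of the comparison only; the subject speed and the two exposure times are made-up values, and the frame rate assumes back-to-back exposures with no gap between them.

```python
def blur_length_px(speed_px_per_s, exposure_s):
    """Distance a moving subject smears across the frame during one exposure."""
    return speed_px_per_s * exposure_s


def max_frames_per_second(exposure_s):
    """Upper bound on shots per second, assuming no gap between exposures."""
    return 1.0 / exposure_s


subject_speed = 1000.0  # pixels per second (hypothetical)

for label, exposure in [("slow shutter", 1 / 10), ("fast shutter", 1 / 500)]:
    blur = blur_length_px(subject_speed, exposure)
    fps = max_frames_per_second(exposure)
    print(f"{label}: blur of about {blur:.0f} px, up to {fps:.0f} shots per second")
```

The shorter the exposure, the less the subject smears and the more separate samples fit into a second, which mirrors the difference between a slow and a fast refresh of the signal.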

Feature Detection And Parallel Processing

Before we can get into why our color memory is so bad, we have to talk about which photoreceptors are mainly responsible for capturing images and how this affects what we perceive. We learned above about firing rates and that cones are much quicker to fire again. This means that the information from cones is refreshed many more times per second than rods can fire another signal. Cones are found almost exclusively in the fovea, the part of the retina that captures the sharpest, highest-resolution image. This is the part that is used when our eyes focus on an image.

When talking about capturing signals on the retina, we also have to think about how this information is passed on. When digging into this topic, I found that there are two main pathways that carry this information into our brains. Our brain can process information in parallel, but depending on which signal path is activated, a different set of information is processed.

parallel processing

Different information that is processed:

- color

- spatial resolution

- form / shape

- detail / texture

- contrast detection / boundaries of objects

- motion

- tracking objects in space

Read more on Neural Correlates Of Objects vs. Spatial Visualization Abilities at Harvard Imagery Lab

Parvo Pathway

The parvo pathway has a very high level of spatial resolution but poor temporal resolution. It allows us to detect color as well as an object’s form, shape, contrast, and texture. As you might have figured from this set of processed information, this is the signal path we use almost exclusively when looking at a still image, and it is all done by the cones, which are constantly refreshing the information.

Magno Pathway

On the other hand, there is the magno pathway, with very high temporal resolution but poor spatial resolution. It allows us to encode motion, track objects in space, and predict an object’s path; however, this signal path does not process color information. The information can be used in further processing to calculate where an object is moving and, combined with other information such as that from our ears, to tell us whether we are moving ourselves or whether an object we detected is changing its position.

Both pathways are used simultaneously, but one might outweigh the other depending on what we are looking at.

The following shows how spatial frequency or motion affects our contrast detection. The more something is moving, the less contrast we detect. Lines blur, and at some point recognizing detail becomes almost impossible.
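The loss of contrast with motion can be illustrated numerically. The sketch below averages a drifting striped pattern (a sine grating) over a short time window and measures the remaining Michelson contrast, (max - min) / (max + min). The fixed averaging window is an assumption that stands in for whatever integration the visual system really performs, and the function name and all parameter values are hypothetical.

```python
import math


def contrast_after_averaging(speed, spatial_freq=1.0, window=0.1,
                             time_samples=200, points=400):
    """Michelson contrast of a drifting sine grating averaged over `window` seconds.

    speed        -- drift speed in pattern widths per second
    spatial_freq -- stripes (cycles) per pattern width
    """
    averaged = []
    for i in range(points):
        x = i / points  # position across the pattern
        total = 0.0
        for k in range(time_samples):
            t = window * k / time_samples
            phase = 2 * math.pi * spatial_freq * (x - speed * t)
            total += 0.5 + 0.5 * math.sin(phase)  # brightness between 0 and 1
        averaged.append(total / time_samples)
    darkest, brightest = min(averaged), max(averaged)
    return (brightest - darkest) / (brightest + darkest)


for speed in (0.0, 2.0, 5.0, 8.0):
    print(f"speed {speed}: contrast {contrast_after_averaging(speed):.2f}")
```

In this toy setup a stationary pattern keeps nearly full contrast, and the contrast drops as the drift speed increases over the range shown, because bright and dark stripes increasingly overlap within the averaging window.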

Information Storage & Renewal

Due to the refresh rate of cones and the lack of storage capacity for signals through the magno pathway, every human will have a hard time remembering colors. What we can do is compare colors and reference them to each other. You will never be able to accurately pick one color out of five similar colors unless you are presented with a color as a reference.

On the other hand, shape and texture stick much more easily in our brains. We have learned to recognize shapes and faces from a young age because recognizing those close to us has always been essential for our survival. Parallel signal processing also makes this possible, as both signal pathways can be triggered at the same time, and depending on how much the object we are looking at is moving, one or the other signal path will carry more weight in processing the information.
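The point about comparing colors against a reference has a simple numerical counterpart. One common way to express how far apart two colors are is the CIE76 color difference, which is the straight-line distance between two colors in the CIELAB color space. The sketch below uses it to pick the closest of five similar swatches; the Lab values and swatch names are made up for illustration.

```python
import math


def delta_e_cie76(lab_a, lab_b):
    """CIE76 color difference: Euclidean distance between two CIELAB colors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(lab_a, lab_b)))


# Hypothetical (L*, a*, b*) values: one reference and five similar swatches.
reference = (52.0, 40.0, 20.0)
candidates = {
    "swatch_1": (52.0, 43.0, 24.0),
    "swatch_2": (51.0, 40.0, 21.0),
    "swatch_3": (54.0, 38.0, 19.0),
    "swatch_4": (50.0, 44.0, 22.0),
    "swatch_5": (52.0, 41.0, 18.0),
}

differences = {name: delta_e_cie76(reference, lab) for name, lab in candidates.items()}
closest = min(differences, key=differences.get)

for name, diff in sorted(differences.items(), key=lambda item: item[1]):
    print(f"{name}: difference {diff:.2f}")
print("closest match:", closest)
```

Without the reference there is nothing to measure against, which is the numerical version of why picking the right swatch from memory alone is so hard.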

How Does Signal Processing Relate To Color Management?

Having different signal paths and constantly refreshed information is what makes it hard, in color management, to compare proof prints to an image on a screen. We have to move our eyes from one medium to the other, and in the process the information is partially overwritten by newly incoming signals. I have seen case studies and research trying to solve this problem using translucent screens, which would allow for a much more direct comparison of color values. Taking out the variable of moving head and eyes to focus on the reference would make comparisons much more precise, but the solutions are not yet at a level to make their way into the industry. For now, we all have to keep moving our eyes to compare, so it is still very important to set up a proofing workspace as precisely as possible to minimize all the variables that might come into play.

When setting up a proofing space, the screen has to be set up to match the color temperature of the printed medium and the lighting conditions within a light box. In this scenario, brightness also comes into play, whereas when calibrating a monitor that is not part of a proofing station, the brightness is usually chosen to make working easier on the eyes.

Read more on Color Calibration And Setting Up Your Monitor For Retouching